What Could Be Done to Reduce Health Care Spending and Improve Health Outcomes? – U.S. Government Accountability Office (GAO) (.gov)

Report on the Sustainable Development Implications of Generative Artificial Intelligence

Introduction: Balancing Innovation with Global Goals

Generative Artificial Intelligence (AI) presents a paradigm shift with the potential to significantly enhance productivity and reshape industries. However, its rapid development and deployment pose considerable challenges to the achievement of the United Nations Sustainable Development Goals (SDGs). This report assesses the environmental and human effects of generative AI, framing them within the context of the 2030 Agenda for Sustainable Development. The analysis highlights critical resource consumption patterns, socio-economic risks, and policy pathways to align AI’s trajectory with global sustainability and human well-being.

Environmental Impact Assessment and Alignment with SDGs

The infrastructure required to train and operate generative AI models is resource-intensive, creating direct tensions with several key environmental SDGs. A lack of transparent reporting from technology companies exacerbates these challenges, hindering effective governance and accountability.

Energy Consumption and Climate Action (SDG 7, SDG 13)

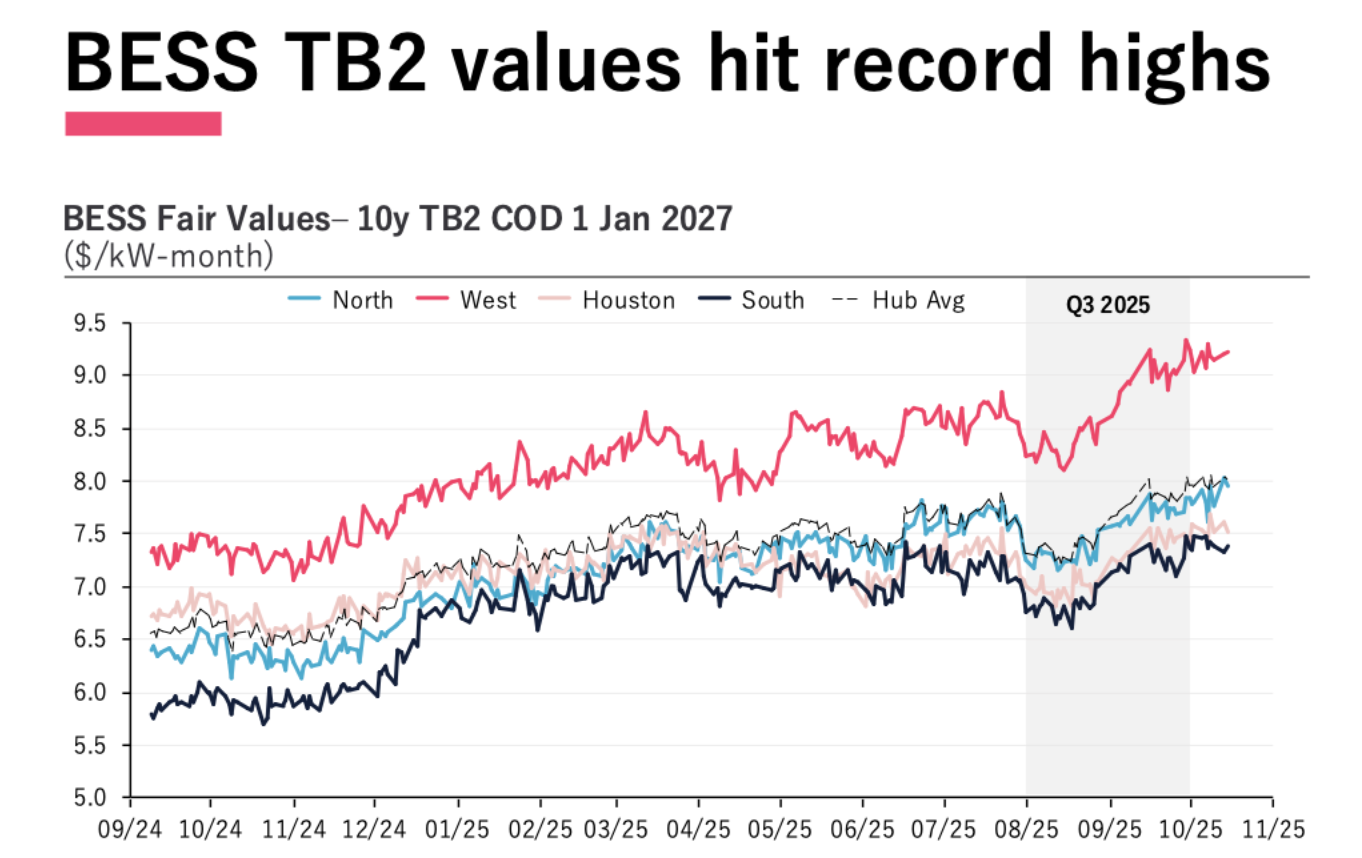

- High Electricity Demand: Generative AI is a primary driver of increasing electricity consumption in data centers. U.S. data center electricity consumption was approximately 4% of national electricity demand in 2022 and could reach 6% in 2026, straining energy grids and challenging the objectives of SDG 7 (Affordable and Clean Energy).

- Carbon Emissions: The significant energy required for model training and inference contributes directly to carbon emissions, undermining progress toward SDG 13 (Climate Action). The full carbon footprint remains unclear due to limited corporate disclosure.
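The 4% and 6% shares cited above can be read two ways: as a change in percentage points or as a relative increase in the share. The short Python sketch below works through both. The function name `share_change` is illustrative and not from the report, and the calculation covers only the share of demand; the absolute change in consumption would also depend on total U.S. electricity demand, which is not given here.

```python
def share_change(start_share: float, end_share: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    point_change = end_share - start_share
    relative_change = (end_share - start_share) / start_share * 100
    return point_change, relative_change


# Shares of U.S. electricity demand cited in the text: 4% (2022), 6% (2026).
points, relative = share_change(4.0, 6.0)
print(f"{points:.1f} percentage points, {relative:.0f}% relative increase")
# prints "2.0 percentage points, 50% relative increase"
```

In other words, a rise of two percentage points corresponds to a 50% increase in the data center share of demand.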

Water Consumption and Resource Management (SDG 6, SDG 12)

- Water for Cooling: Data centers utilize substantial volumes of water for cooling IT equipment to prevent overheating. This consumption places pressure on local water resources, directly impacting SDG 6 (Clean Water and Sanitation).

- Resource Efficiency: The lifecycle of AI hardware, from manufacturing to disposal, alongside its operational energy and water use, raises concerns about sustainable production and consumption patterns, which are central to SDG 12 (Responsible Consumption and Production).

Socio-Economic Impact Assessment and Alignment with SDGs

While generative AI offers economic benefits, it also introduces profound risks to social equity, justice, and economic stability. These human effects must be managed to ensure that technological advancement contributes positively to the SDGs.

Labor Market Disruption and Economic Growth (SDG 8)

- Job Displacement: Generative AI has the potential to automate tasks and replace workers across various sectors, posing a significant threat to SDG 8 (Decent Work and Economic Growth).

- Productivity Gains: Conversely, the technology can also augment human capabilities and increase productivity, which, if managed equitably, could support the objectives of SDG 8.

Information Integrity and Institutional Stability (SDG 16)

- Misinformation and Deepfakes: The capacity of generative AI to create convincing but false content, such as deepfakes, threatens to erode public trust, spread disinformation, and destabilize democratic processes, thereby undermining SDG 16 (Peace, Justice, and Strong Institutions).

- Malicious Use: The technology can be leveraged to enable malicious behavior and compromise national security, further challenging the stability and justice sought by SDG 16.

Safety, Equity, and Social Fabric (SDG 10)

- Unsafe and Biased Outputs: AI systems may produce inaccurate, undesirable, or biased outputs that can compromise public safety and reinforce existing societal biases, working against the goal of SDG 10 (Reduced Inequalities).

- Accountability Gaps: The complexity of generative AI and the lack of transparency from developers make it difficult to assign accountability for harmful outcomes, creating risks for society and culture.

Policy Options for Sustainable AI Governance

To mitigate the risks and harness the benefits of generative AI in alignment with the SDGs, policymakers can consider several strategic options. These options emphasize accountability, innovation, and multi-stakeholder collaboration, reflecting the principles of SDG 17 (Partnerships for the Goals).

Enhance Environmental Accountability and Reporting

To address challenges related to SDGs 6, 7, 12, and 13, a focus on data and transparency is crucial.

- Encourage or mandate industry reporting on key environmental metrics, including energy consumption, carbon emissions, and water usage for both training and deployment of AI models.

- Promote standardized methodologies for assessing the environmental lifecycle of AI systems, from hardware manufacturing to disposal.
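As a rough illustration of what reporting on these metrics could look like in practice, the sketch below defines a minimal disclosure record in Python. The class name, field names, units, and sample values are all assumptions made for illustration; the report does not prescribe a schema, and the numbers are placeholders rather than real measurements.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class EnvironmentalDisclosure:
    """One hypothetical disclosure record for a model and lifecycle phase."""
    model_name: str
    phase: str             # assumed values: "training" or "deployment"
    energy_kwh: float      # electricity consumed
    carbon_kg_co2e: float  # associated carbon emissions
    water_liters: float    # water consumed, e.g. for cooling


def total_by_metric(records: Iterable[EnvironmentalDisclosure]) -> dict[str, float]:
    """Sum each environmental metric across all disclosure records."""
    totals = {"energy_kwh": 0.0, "carbon_kg_co2e": 0.0, "water_liters": 0.0}
    for record in records:
        for metric in totals:
            totals[metric] += getattr(record, metric)
    return totals


# Placeholder values for illustration only; they are not real measurements.
records = [
    EnvironmentalDisclosure("example-model", "training", 100.0, 40.0, 300.0),
    EnvironmentalDisclosure("example-model", "deployment", 50.0, 20.0, 150.0),
]
print(total_by_metric(records))
```

Keeping training and deployment as separate records mirrors the distinction drawn in the policy option, so totals can be reported per phase or combined.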

Incentivize Sustainable Innovation

Foster the development of AI technologies that are inherently more aligned with environmental and social goals.

- Support research and development of more resource-efficient models, algorithms, and hardware to reduce the energy and water footprint of AI.

- Create incentives for developers who prioritize sustainability, safety, and fairness in their AI system designs.

Strengthen Human-Centric Governance Frameworks

To safeguard SDGs 8, 10, and 16, robust governance mechanisms are required.

- Promote the adoption of established frameworks, such as the GAO AI Accountability Framework and the NIST AI Risk Management Framework, to manage risks and increase public trust.

- Encourage developers to establish clear and enforceable acceptable use policies that prohibit the creation of harmful or malicious content.

Foster Multi-Stakeholder Collaboration and Standards

Leverage SDG 17 by building partnerships to create a globally coherent approach to AI governance.

- Facilitate knowledge-sharing platforms for industry, government, and academia to exchange best practices for managing the human and environmental effects of AI.

- Support standards-developing organizations in creating consensus-based technical standards and best practices for responsible AI development and deployment.

Analysis of Sustainable Development Goals in the Article

1. Which SDGs are addressed or connected to the issues highlighted in the article?

The article on Generative AI’s environmental and human effects connects to several Sustainable Development Goals (SDGs) by discussing the technology’s impact on natural resources, economic structures, and societal well-being. The following SDGs are addressed:

- SDG 6: Clean Water and Sanitation – The article explicitly mentions the high water consumption required to cool IT equipment for generative AI.

- SDG 7: Affordable and Clean Energy – The significant electricity consumption of data centers powering AI is a central theme.

- SDG 8: Decent Work and Economic Growth – The article highlights both the potential for AI to “dramatically increase productivity” and the risk that it “could replace workers.”

- SDG 9: Industry, Innovation, and Infrastructure – The discussion revolves around a key technological innovation (Generative AI) and its underlying infrastructure (data centers), with policy options focused on encouraging further innovation for efficiency.

- SDG 12: Responsible Consumption and Production – The article addresses the resource intensity (energy and water) of AI and points to a lack of corporate reporting on these environmental impacts.

- SDG 13: Climate Action – The energy consumption of AI is directly linked to “carbon emissions,” which is a core concern of climate action.

- SDG 16: Peace, Justice, and Strong Institutions – The risks of AI generating “inaccurate information” and “dangerous deepfakes” relate to undermining trust, spreading false information, and potentially enabling malicious behavior, which affects the integrity of institutions and public access to reliable information.

2. What specific targets under those SDGs can be identified based on the article’s content?

Based on the issues discussed, the following specific SDG targets can be identified:

- Target 6.4: By 2030, substantially increase water-use efficiency across all sectors.

- Explanation: The article’s concern that “the IT equipment that powers generative AI needs a lot of water” and that “Estimates of water consumption by generative AI are limited” directly relates to the need for greater efficiency in water use for this growing industry.

- Target 7.3: By 2030, double the global rate of improvement in energy efficiency.

- Explanation: The article highlights that “Generative AI uses significant energy” and that data center electricity consumption is projected to rise. The policy option to “encourage developers and researchers to create more resource-efficient models” aligns with improving energy efficiency.

- Target 8.2: Achieve higher levels of economic productivity through diversification, technological upgrading and innovation.

- Explanation: The article states that “Generative AI could dramatically increase productivity and transform workloads in many industries,” which speaks directly to this target’s goal of using technology to boost economic productivity.

- Target 8.5: By 2030, achieve full and productive employment and decent work for all.

- Explanation: The article identifies a significant risk to this target by noting that “generative AI could replace workers,” posing a challenge to maintaining full employment.

- Target 9.4: By 2030, upgrade infrastructure and retrofit industries to make them sustainable, with increased resource-use efficiency.

- Explanation: This target is relevant to the need to develop “more efficient hardware and infrastructure to reduce energy and water use” in data centers, as mentioned in the policy options.

- Target 12.6: Encourage companies, especially large and transnational companies, to adopt sustainable practices and to integrate sustainability information into their reporting cycle.

- Explanation: The article directly addresses this target by stating that “companies are generally not reporting details of these uses [energy and water]” and proposing a policy option to “Improve data collection and reporting” where developers would provide information on “energy consumption, carbon emissions, and water consumption.”

- Target 16.10: Ensure public access to information and protect fundamental freedoms, in accordance with national legislation and international agreements.

- Explanation: The risk of generative AI being used to “help spread false information” and create “dangerous deepfakes” directly threatens the integrity of public information, which is a cornerstone of this target.

3. Are there any indicators mentioned or implied in the article that can be used to measure progress towards the identified targets?

The article implies several indicators that could be used to measure progress:

- Indicator for Targets 6.4 and 7.3: Change in water and energy efficiency.

- Evidence: The article implies the need for metrics on “water consumption” and “electricity consumption” of data centers. It provides a specific data point: “U.S. data center electricity consumption was approximately 4 percent of U.S. electricity demand in 2022 and could be 6 percent of demand in 2026.” Tracking these figures would serve as a direct indicator of resource efficiency.

- Indicator for Target 12.6: Number of companies publishing sustainability reports.

- Evidence: The article’s emphasis on the fact that “companies are generally not reporting details” and the policy option for developers to “provide information such as model details, infrastructure used… energy consumption, carbon emissions, and water consumption” points to corporate reporting as a key indicator of accountability and progress.

- Indicator for SDG 13 (Climate Action): Amount of CO2 emissions.

- Evidence: The article mentions that environmental effect estimates have focused on “carbon emissions associated with generating that energy.” Measuring these emissions from AI operations would be a direct indicator for climate impact.

- Indicator for Target 8.5: Employment rates in sectors affected by AI automation.

- Evidence: The statement that “generative AI could replace workers” implies that an important metric for assessing its human impact would be tracking changes in employment and displacement rates within industries adopting this technology.

- Indicator for Target 16.10: Prevalence of misinformation and malicious content.

- Evidence: The identification of risks such as “inaccurate information,” “undesirable content,” “dangerous deepfakes,” and the “enabling of malicious behavior” suggests that tracking the volume and impact of such content would be an indicator of AI’s effect on the information ecosystem.

4. Summary Table of SDGs, Targets, and Indicators

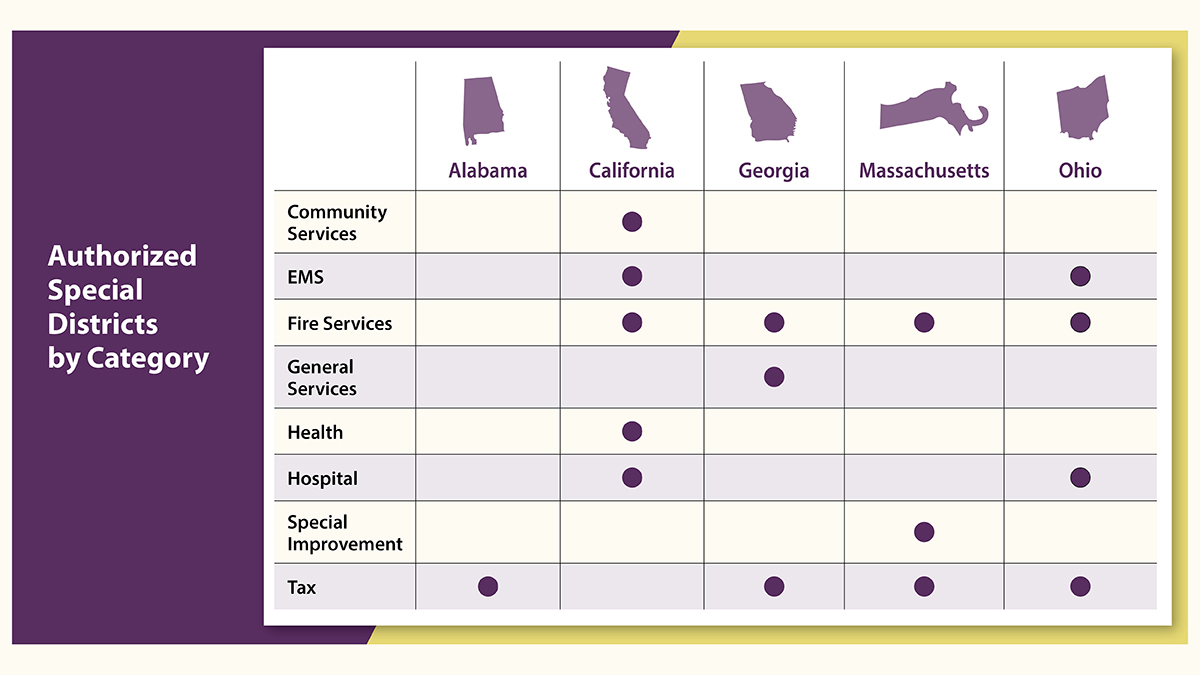

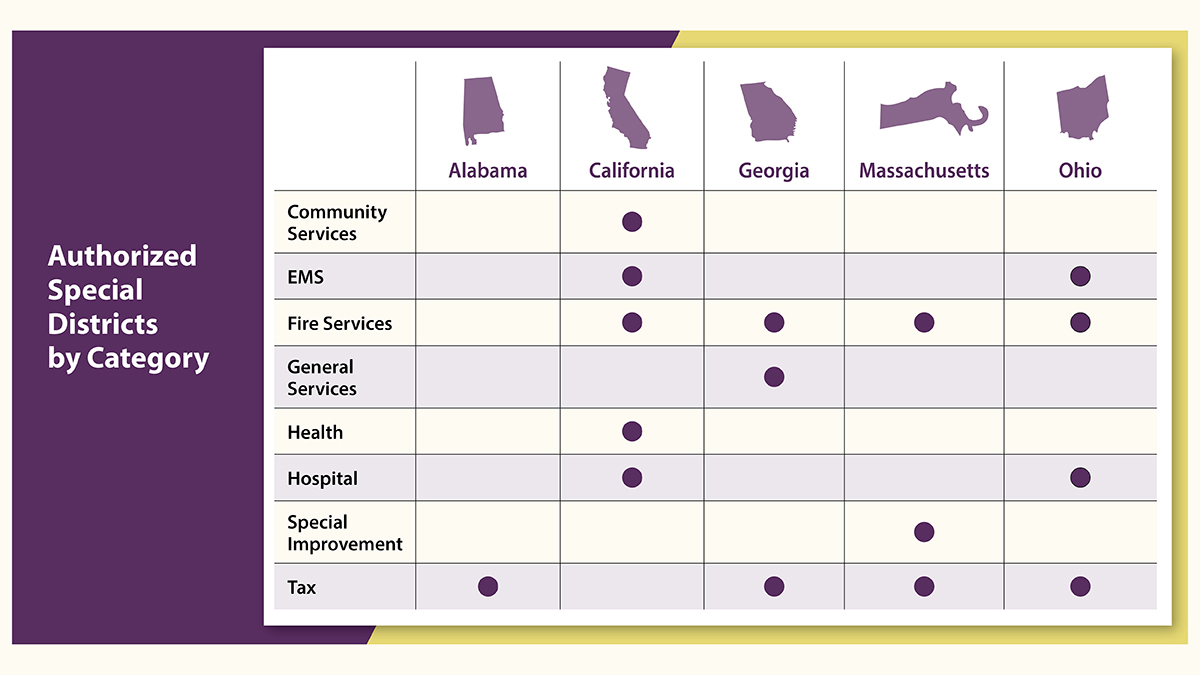

| SDGs | Targets | Indicators (Mentioned or Implied in the Article) |
|---|---|---|
| SDG 6: Clean Water and Sanitation | 6.4: Substantially increase water-use efficiency across all sectors. | Volume of water consumption by data centers powering generative AI. |
| SDG 7: Affordable and Clean Energy | 7.3: Double the global rate of improvement in energy efficiency. | Percentage of national electricity demand from data centers; energy consumption per AI model/task. |
| SDG 8: Decent Work and Economic Growth | 8.2: Achieve higher levels of economic productivity. 8.5: Achieve full and productive employment. | Productivity gains in industries using AI; rate of worker displacement due to AI automation. |
| SDG 9: Industry, Innovation, and Infrastructure | 9.4: Upgrade infrastructure and retrofit industries to make them sustainable. | Investment in and development of resource-efficient AI hardware and infrastructure. |
| SDG 12: Responsible Consumption and Production | 12.6: Encourage companies to adopt sustainable practices and integrate sustainability information into their reporting. | Number of AI companies reporting on energy consumption, water use, and carbon emissions. |
| SDG 13: Climate Action | General goal to combat climate change and its impacts. | Volume of carbon emissions associated with training and running generative AI models. |
| SDG 16: Peace, Justice, and Strong Institutions | 16.10: Ensure public access to information. | Prevalence and societal impact of AI-generated misinformation, deepfakes, and malicious content. |

Source: gao.gov
